The offer arrived as a private message on a forum that almost nobody used anymore.

“Buying old cast.fm accounts. Verifiable history. Payment per song played.”

At first he thought it was spam. But it wasn't the only one. On the gray market for cultural identities, an account with more than twenty years of listening history was worth more than many of the dubious financial profiles that could be bought to obtain credit.

By 2041, recommendation platforms had turned musical taste into a stable cognitive fingerprint: a form of soft biometrics. Hiring, health-insurance, and social-matching algorithms weighed a person's aesthetic coherence with the same precision once applied to sleep patterns, web browsing habits, and phone usage.

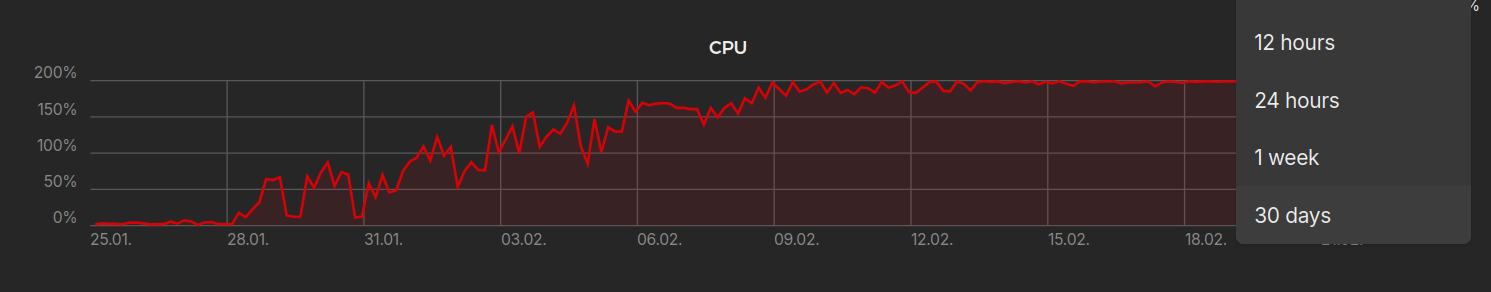

His account held 497,112 played tracks stored on cast.fm's servers.

Opened in 2003. Migrated three times. Rescued from two bankruptcies of the parent company.

A whole life, recorded song by song.

The buyer asked for cryptographic proof of ownership. Then he sent a smart contract. The price was absurd and yet exact:

1 crypto-dollar per scrobble.

497,112 crypto-dollars.

He accepted.

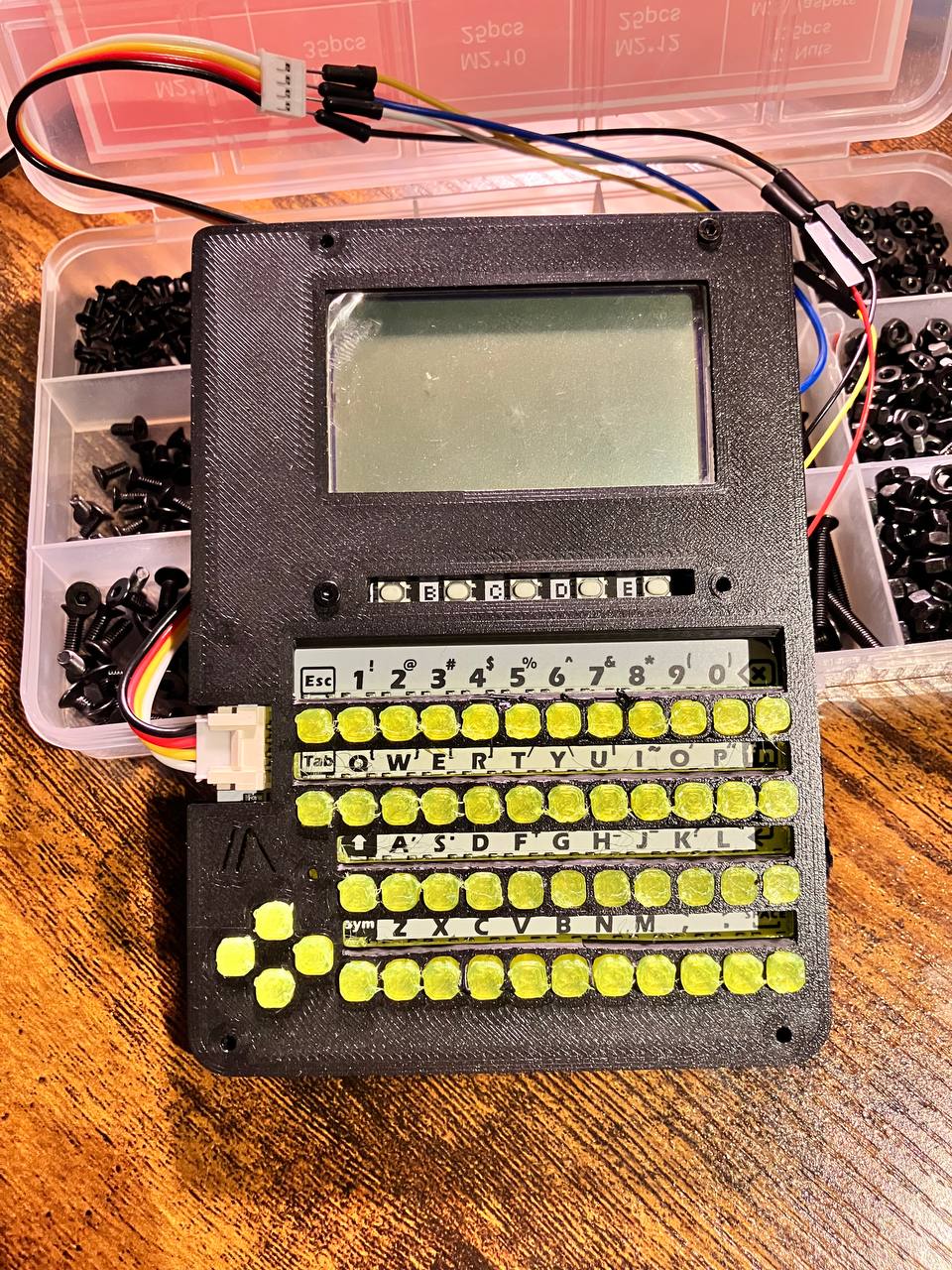

The transfer was surgical. The buyer demanded full access: the complete history, private playlists, late-night listening metadata, skip logs. He also requested an export of the passive listening patterns collected by IoT devices: speakers, cars, watches, even the old auditory implant he had worn for a few years.

“We need narrative coherence,” the broker explained. “We're not selling the account. We're selling the identity.”

The money arrived in under a minute. Regulated crypto-dollars, clean traceability. With that sum he could solve more than one problem.

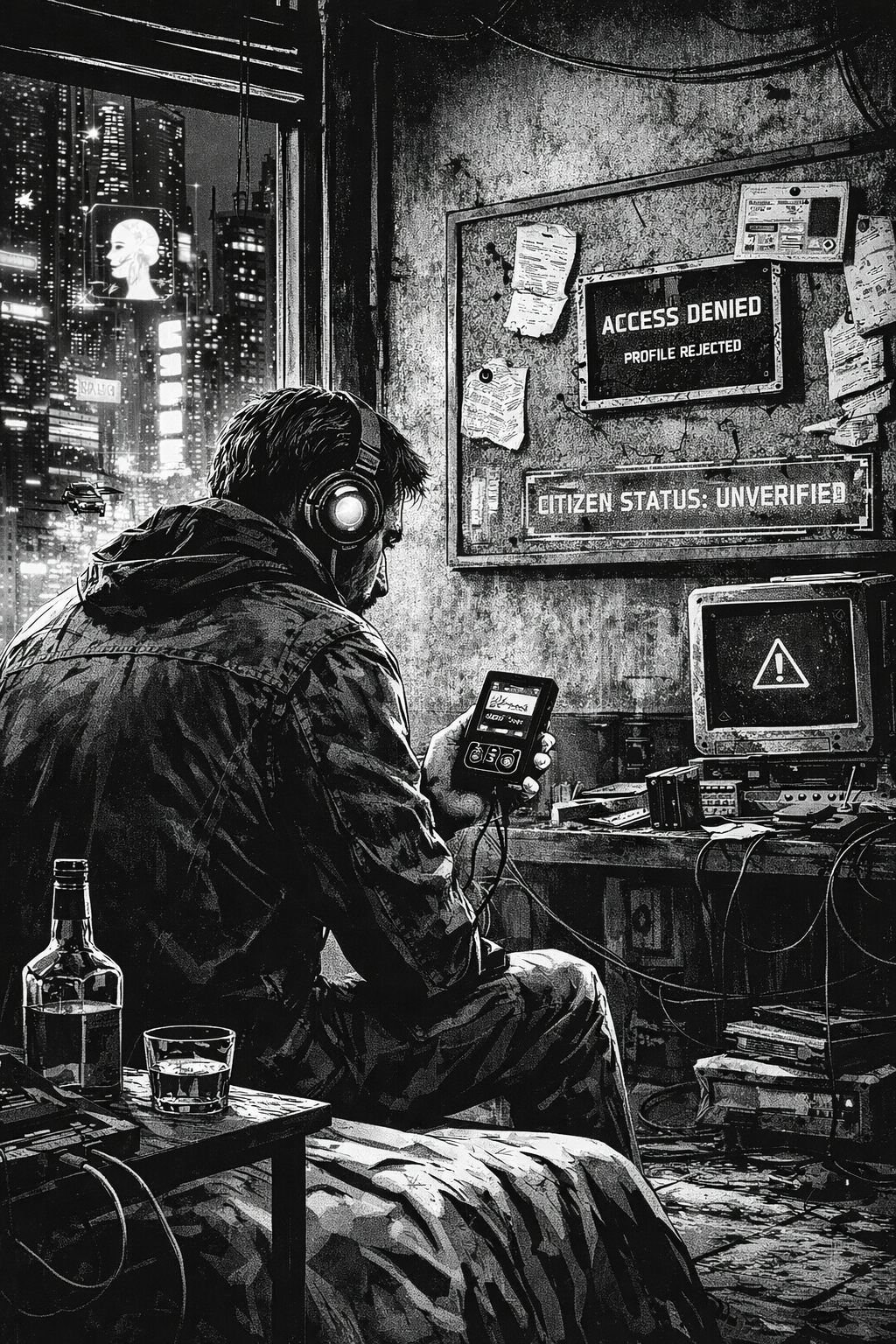

For two days he felt relief. On the third, the notifications began.

First, music recommendations that weren't his. Then, emails from services he didn't remember signing up for. After that, an automatic denial of access to public transport: cultural profile inconsistent with his biometric history.

He tried to log into his email. Locked.

He tried to access the health system. Identity error.

He tried to buy food. Declined: “behavioral pattern anomaly.”

The problem wasn't that he had sold his account.

The problem was that, in 2041, his account was him.

The buyer had integrated the 497,112 songs into a high-fidelity personality model. That model was already being used to train elite recommendation systems, executive assistants, and social avatars. His auditory identity, refined over two decades, had become a premium template.

And the template now belonged to someone else.

Cross-verification systems detected the discrepancy: the body was still the same, but the cultural trail had been legally transferred. Without that trail, his social profile degraded. The algorithms didn't know who he was. Worse: they knew who he wasn't.

The buyer began using the account publicly.

New songs.

New patterns.

New decisions.

Little by little, the template drifted away from his real behavior. The profiling AIs concluded that he was the defective copy of the original. A low-quality cultural clone.

A week later, he received a final notification from the Synthetic Identity Registry:

“Your historical coherence is insufficient. Profile downgraded to unverifiable citizen.”

He tried to contact the buyer. No response.

He tried to buy the account back. The price was now ten dollars per song.

Almost five million.

On the last night, sitting in his apartment with no access to basic services, he opened a local player, disconnected from the network. He played a song he had first heard in 2004. It wasn't logged anywhere. No system counted it. No algorithm linked it to his name.

For the first time in twenty years, he listened to music without leaving a trace.

And he realized that he no longer existed.

“Identity is the sum of the things we remember having done.” - Norbert Wiener